GridSearchCV(cv=5,

estimator=Pipeline(steps=[('col_tf',

ColumnTransformer(transformers=[('num',

Pipeline(steps=[('num_impute',

SimpleImputer())]),

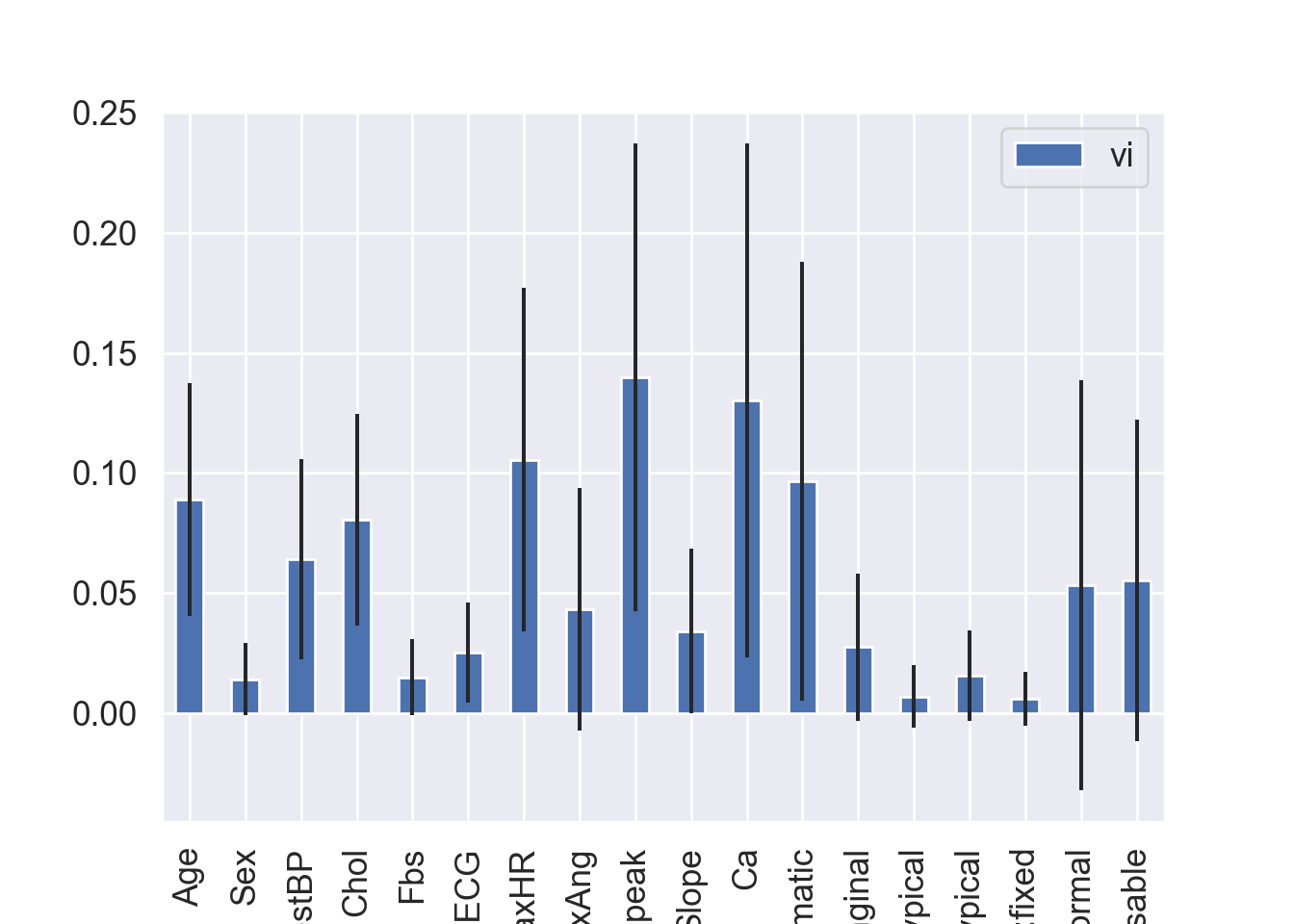

['Age',

'Sex',

'RestBP',

'Chol',

'Fbs',

'RestECG',

'MaxHR',

'ExAng',

'Oldpeak',

'Slope',

'Ca']),

('cat',

Pipeline(steps=[('cat_impute',

SimpleImputer(strategy='most_frequent')),

('encoder',

OneHotEncoder())]),

['ChestPain',

'Thal'])])),

('model',

RandomForestClassifier(oob_score=True,

random_state=425))]),

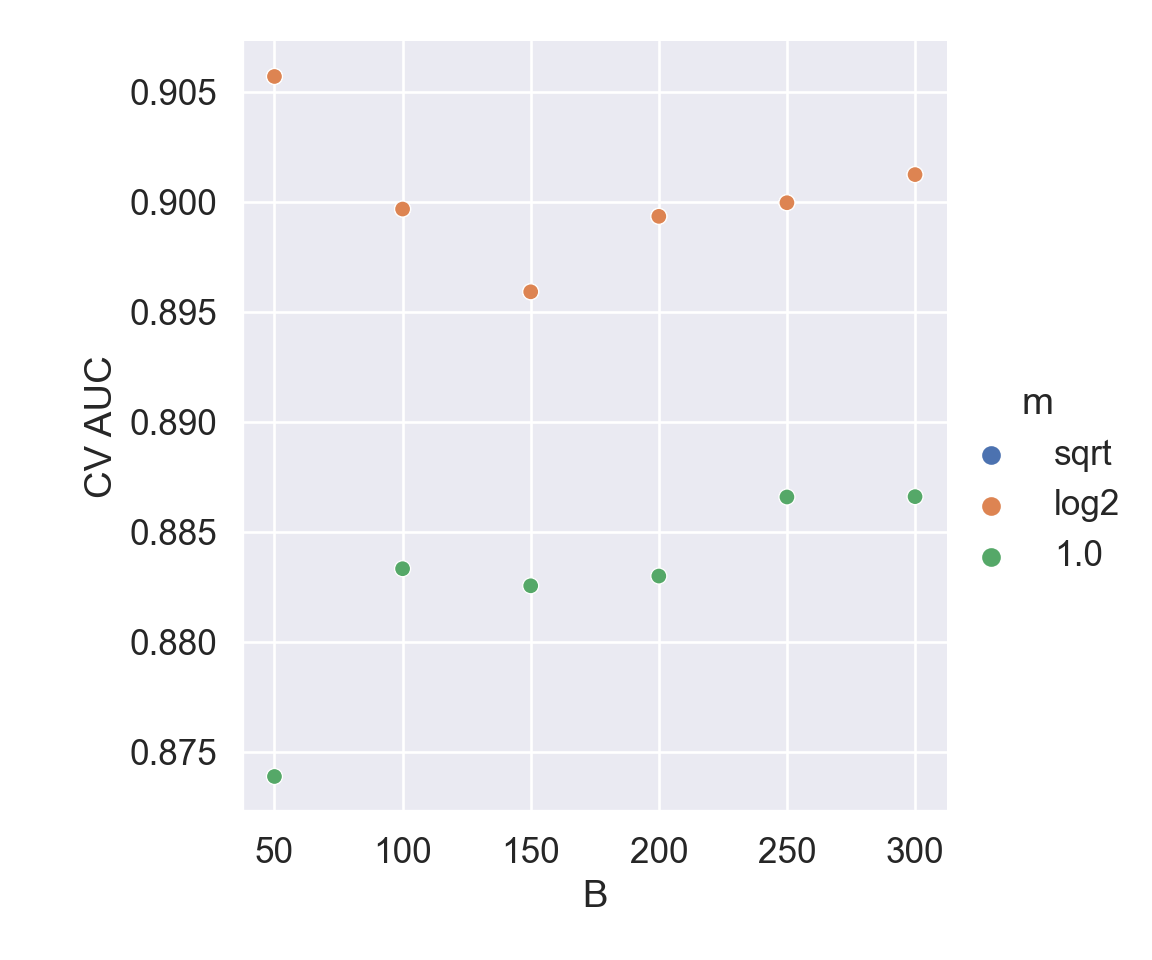

param_grid={'model__max_features': ['sqrt', 'log2', 1.0],

'model__n_estimators': [50, 100, 150, 200, 250, 300]},

scoring='roc_auc')In a Jupyter environment, please rerun this cell to show the HTML representation or trust the notebook.

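The printed estimator above can be rebuilt from its repr. The following is a minimal sketch of code that would produce an equivalent object. The variable names (`num_cols`, `cat_cols`, `num_pipe`, `cat_pipe`, `col_tf`, `pipe`, `grid_search`) are assumptions chosen for illustration and do not come from the original notebook. Step names, column lists, and hyperparameters are copied from the repr.

```python
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder

# Column lists exactly as they appear in the repr (variable names are hypothetical).
num_cols = ["Age", "Sex", "RestBP", "Chol", "Fbs", "RestECG",
            "MaxHR", "ExAng", "Oldpeak", "Slope", "Ca"]
cat_cols = ["ChestPain", "Thal"]

# Numeric branch: default SimpleImputer (mean imputation).
num_pipe = Pipeline(steps=[("num_impute", SimpleImputer())])

# Categorical branch: most-frequent imputation, then one-hot encoding.
cat_pipe = Pipeline(steps=[
    ("cat_impute", SimpleImputer(strategy="most_frequent")),
    ("encoder", OneHotEncoder()),
])

# Route each column group through its own branch.
col_tf = ColumnTransformer(transformers=[
    ("num", num_pipe, num_cols),
    ("cat", cat_pipe, cat_cols),
])

# Preprocessing followed by the random forest shown in the repr.
pipe = Pipeline(steps=[
    ("col_tf", col_tf),
    ("model", RandomForestClassifier(oob_score=True, random_state=425)),
])

# The "model__" prefix addresses parameters of the pipeline step named "model".
param_grid = {
    "model__max_features": ["sqrt", "log2", 1.0],
    "model__n_estimators": [50, 100, 150, 200, 250, 300],
}

# 3 x 6 = 18 candidate settings, each scored by 5-fold cross-validated ROC AUC.
grid_search = GridSearchCV(estimator=pipe, param_grid=param_grid,
                           cv=5, scoring="roc_auc")
```

Calling `grid_search.fit(X, y)` would then run the search, where `X` is assumed to be a pandas DataFrame containing the columns listed above and `y` a binary target. Neither is shown in this output cell.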